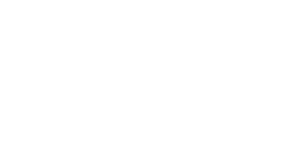

FYI: Lo, though AI walks through the Uncanny Valley…

Unless you’ve been living under a pixel for the last few years, you cannot have avoided the discussion about AI on both a technological level and a moral one. We’re decades beyond the tales of the Terminator and other fictional ‘rise of the machines’ cinematic experiences and in a place where, in real life, computers and artificial intelligence are part of our daily lives. There are even examples of self-contained systems that don’t need human decision-making. (A recent study concerning AI also showed computers lying to operators… so, hey, the jury is still out, though you could always ask your Alexa..?) Sentient, duplicitous machines would obviously have repercussions with global implications, but even in ‘smaller’, more intimate arenas, such as art… ah, well, there’s the rub. In illustration and moving pictures, is a less maniacal AI just another dynamic asset to draw from the toolbox or a lazy shortcut?

Actual Armageddon notwithstanding, one of the biggest everyday arguments about AI is on the creative side. The likes of Marvel Comics and DC Comics have now formally acknowledged policies that they will not hire ‘creatives’ who use AI to create their content. Though there are elements of pragmatic quality control in that position, it’s largely down to one of the key aspects of AI: that it ‘scrapes’ existing artwork and amalgamates it into something else without credit and – far too often – without even seeking permission. (But it’s also the comics industry noting the public reaction to this – after all, neither company seems to react quite as vigorously when some of their artists actively trace material.) Ask an AI service to render something in the style of an existing creator and within seconds you can have it… but that original creator doesn’t see a penny and probably never knew his/her name was in a database that’s being searched to produce the ‘new’ art. Equally, artists who have perfected their craft over the years sometimes see their legitimate work wrongly accused of being AI-generated. It’s a potential and sometimes subjective minefield.

Move from the page to the screen and it doesn’t get any less controversial, though it’s something of a moving target…

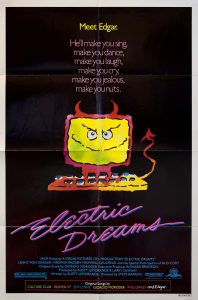

“AI is already an everyday factor. As a VFX artist, I’ve been using it for years in one form or another. And I’m now seeing studios dumping tons of money into AI development. I think the major disadvantage of AI content in general right now is public acceptance. You can create an amazing piece of art, whether it’s an image, video, or music, regardless of whether it’s for an ad, commercial or film, and people will judge it based on how it was created and not the end result. And it’s not just a dislike, it’s a deep-seated seething hatred for a machine that is ‘stealing jobs and the soul of artists while destroying the planet’. This is no different from 30 years ago when CG was the bad guy that was going to replace all actors, stuntmen, extras and practical effects artists. The consensus among most people was that it looked like crap and no one would ever watch a CG movie,” notes Bob Chapin, now a multi-hyphenate in acting, stunts and major VFX work on the likes of Armageddon, Star Trek: Picard and Star Wars: The Force Awakens. “But to a budding VFX artist at 18, TRON was my gateway drug. I wanted to do that, even though there were no classes anywhere, no software, no internet, and no YouTube videos. Just me and my lil’ TRS-80. And after years of seeing CG improve with mind-boggling technology, learning all the software, and watching as the public slowly learned to embrace the medium, cheering their favorite superheroes brought to life or creating an Academy Award-winning film using free software on a laptop, while hiring millions of artists around the world creating a thriving new industry, it’s now AI’s turn to be the bad guy. But if you ask any VFX artist, it’s just another tool. And an amazing one at that. It can do practically anything in a fraction of the time… for those who have embraced it, it’s not taking our jobs, it’s letting us do our jobs faster and better…”

Bob notes that, as in any industry, any major shift in creative forces, in new technology and in how it is applied produces mixed reactions and results, with both huge possibilities and valid concerns.

“Even though it’s not perfect yet (prompting can be a ridiculous exercise in futility), we can all see the writing on the wall. And just like it’s easy to spot bad CG because it’s bad, same goes for AI. It’s easy to spot ‘slop’ but you have no idea how much stuff is being done that is completely invisible,” he continues. “And folks should know that all generative AI is not created equal. Just like artists, there are some who steal with reckless abandon. There are others who are experts at mashing up genres (Star Wars is Kurosawa’s Hidden Fortress in space with a score lifted from Korngold’s Kings Row). And then there are artists who actively pay others to use their work. The AI analog for this is Adobe’s Firefly software which has changed their model based on public demand, ethically sourcing their material from public domain and paying for stock footage so as not to infringe on copyrights…”

Green-screening and considerable post-production work have been part of movies and genre television for years, but the event horizon sometimes shifts and businesses hedge their hedge-funds. Last year Disney – which had entered into a $1 billion partnership with OpenAI – quickly became aware of the controversies on various levels and exited the deal, deciding that public sentiment and financial rewards aren’t always perfectly aligned. OpenAI also announced they were shutting down their Sora AI video app – so clearly concerns still exist that AI isn’t just performance capture in second-hand clothing.

It’s also interesting to note that Take-Two Interactive, the makers of top game GTA (Grand Theft Auto), just controversially eliminated its entire AI team in a move described as the ‘shifting priorities of upper management’. Strauss Zelnick (the company’s Chairman and CEO) seems conflicted, depending on which quotes are attributed to him. He’s said: “There is no creativity that can exist, by definition, in any AI model because it is data-driven…” but also “This company’s products have always been built with machine learning and artificial intelligence. We’ve always been a leader in this space… AI tools are driving cost and time efficiencies…”

(Perhaps more succinctly he’s also said: “…with regards to GTA6, Generative AI has zero part in what [subsidiary] Rockstar Games is building. Their worlds are handcrafted, that’s what differentiates them… tools don’t replace creativity and tools are not projects, they’re different things.” That’s certainly expert threading of a corporate needle…)

Chapin notes that if you accept that AI is here to stay in one form or another, it does not negate the need for guard-rails or enforcing laws that already exist – even if such legal requirements may have to be tweaked to keep up.

“The biggest advantage I see as AI gets better, is that we will see amazing filmmakers come out of nowhere. I’m interested to see where this goes and the opportunities generative AI will bring to undiscovered talent that would’ve previously been overlooked. As for guardrails, we will certainly see our share of growing pains. Rampant copyright infringement reminds me of the early days of Napster. Everyone was doing it and it took decades to get that into check. I appreciate that some AI companies have put guardrails on what can be created, but I feel that copyright infringement is focusing on the wrong side of things. We already have tons of copyright law in the books – exactly how much you can use from protected works. But even this can be subjective at times. I imagine that using AI, we will be able to specify exactly how much influence constitutes a copyright violation. Note that regardless of whatever guardrails are out there, stand-alone programs like Stable Diffusion and ComfyUI live on millions of home computers. And it’s the wild west out there with absolutely no rules or regulations. And of course that includes porn, which ironically has been one of the biggest influences driving tech innovation for decades.”

VFX will likely be the first to feel the effects, but what about actors? Some have already had their likenesses scanned in so they won’t be needed for reshoots or posthumous projects, and some performers have had to scrutinise their contracts very carefully! (On the flipside, Val Kilmer hoped to star in a historical feature called As Deep as the Grave but succumbed to cancer in 2025 before he could film a single scene. Normally, writer and director Coerte Voorhees would have had to replace the actor and do reshoots, but the production couldn’t afford either to reshoot the scenes with other actors who had already completed their work or to scrap the movie entirely over the loss. However, Kilmer will now still appear in the forthcoming film, with Voorhees recreating Kilmer’s presence via AI with the full support of his family and estate. The film’s cast will include the very much alive Abigail Breslin, Finn Jones, Wes Studi and Ewen Bremner.)

Will AI be able to fully navigate the ‘uncanny valley’ – the aspect of virtual design and animation that, however good, still, somehow, fails to fool the viewer or faultlessly emulate real life? Some will scoff, but there’s probably not a single person reading this who hasn’t been fooled, even momentarily, by an artificial image, especially on smaller screens. And it will get ever harder to tell the difference.

The ‘can’ and ‘should’ aspects continue. In February, film-maker Ruairi Robinson noted that a two-sentence ‘prompt’ fed into the Seedance 2 software produced a hyper-realistic sequence of Brad Pitt and Tom Cruise – in what could clearly pass for a genuine feature-film clip – and he noted “I hate to say it. It’s likely over for us…” adding later “In next to no time, one person is going to be able to sit at a computer and create a movie indistinguishable from what Hollywood now releases. True, if that person is no good, it will suck. But if that person possesses Christopher Nolan’s talent and taste (and someone like that will rapidly come along), it will be tremendous…”

It was the tip of an iceberg, with some studios worrying whether they were the Titanic and immediately checking the status of lawyers and lifeboats. And in the last few weeks alone, the internet has seen a wave of AI works teasing real upcoming projects with fake or mismatched imagery. Avengers: Doomsday, Doctor Who, Highlander… all projects on the legitimate radar, but here given a faux-gloss treatment. In the past a studio might turn a blind eye to non-authorised fan activity and self-made material, noting that ‘any publicity is good publicity’, but with the resolution getting better and with it often hard to tell what’s real or not, there’s now a certain amount of damage control and more overt copyright protection.

But as much as the moral arguments can often hold weight, the pragmatic side of things suggests that it’s already too late to completely reverse course. You can (and should) condemn those who take and reproduce without credit – and sometimes badly – but if such tech can be used with permission, is the authorised shortcut suddenly legitimate?

Take two examples…

Zack London, the LA-based film-maker and force behind the online ‘Gossip Goblin’ identity, has been making a name for himself with weird and wonderful AI-generated visuals that can realistically compete with many of the VFX houses. In a recent profile, the Hollywood Reporter noted that Gossip Goblin had ‘1 million followers on Instagram and millions more views across platforms...’ His first ‘feature’ (actually a 20-minute short), The Patchwright, is about to launch on 12th April (THE PATCHWRIGHT | Sci-Fi Short Film), showing that the ideas can be sustained over a longer narrative. The story takes place in a Blade Runner-like city full of the bizarre characters for which London is known. It avoids the more obvious lower-level ‘slop’ factor, where the images collected to illustrate stories and ideas are often inconsistent and aimed at the disposable and less-discerning end of the market.

Many of London’s works deal with grotesques and exaggerated characters, combining the organic and the technological in ways that could invoke dreams or nightmares or certainly make Terry Gilliam proud. That being said, there’s also material such as The Departure that shows a more poignant side and suggests just how many different types of stories could be told beyond the obvious. You can also get a taste for his style here…

Secondly, looking at the lucrative on-your-phone market and beyond is Spore-Fall. You may not have heard of it yet, but it’s likely you’re about to – or of something similar.

Singapore’s Edenstone Group (‘…an AI-powered narrative studio crafting immersive, and tech-powered IPs for the global entertainment marketplace’) launched Spore-Fall as a ten-episode micro-drama, a story about a disillusioned soldier and a rogue medic in the fractured future Asian city of Lionara. A mysterious spore pathogen breaks out in the city, leading to dramatic reactions, but is it destroying humanity or helping it evolve? The story is written by humans; the visual side is created by AI and openly marketed as such.

Spore-Fall isn’t perfect and it’s hard to judge the potency of the full IP itself from such small servings. (The ten episodes will be released between April and June.) Some scenes work better than others and the audio still feels unnatural, but its deliberate micro-sized instalments (each running to a few minutes at most) do feel more like an inevitable statement of intent and a proof-of-life example for a new side of entertainment flexing its fledgling muscles. It’s reminiscent of reassembling some cut-scenes from computer games (the optional sequences that you don’t control but watch as connective tissue before taking control again) and stitching them together to varying effect.

The gaming world itself has come a long way in just the last decade in upgraded graphics (take, for example, the difference between the original launch versions of both The Last of Us games and their later ‘Remastered’ versions). That visual upgrades used in games would spill out into other media was always a given – it would be just how and when. There’s a sense of graphic evolution and practical world-building that was always going to expand its horizons in some way. The quality and reach of such hi-res imagery might not be ‘the world outside your door’ as yet, but some of it definitely has your zip-code…

The quality and format of these micro-episodes work less well on a larger-screened desktop, but if you have a smart-phone or tablet and a good enough connection, then it’s do-able. The vertical format of the screen gives away that these are the specific, on-the-move devices where Edenstone Group (and many industry pundits) believe the future, and a significant revenue stream, will lie. In March, industry-site Variety also reported that the company now had even greater ambitions – expanding their IP into a multi-platform franchise spanning film (with a touted 2028 release date), games, and collectibles. That’s quite an ambitious slate to propose, but it’s also a sign of the times that it’s even an option.

Chapin continues to lean into pragmatism and notes that a person with very little tech-savvy experience but a ton of ideas now has a way to give them form… and that’s a very hard kind of wish-fulfilment from which to pull back. As with many online elements, including social media, a balance of public demand and public scrutiny will likely decide the future. A faux clip of Pitt and Cruise may be entertaining, but it does raise the question: what else can be faked and for what reason – or what could be claimed to be fake when it’s not?

“Generative AI will have the power to create whatever you can imagine. We’ve already seen it. You want to see a Matrix reboot with the cast of the Muppets? Done. You want to put yourself into the lead role of Raiders of the Lost Ark? Done. Unfortunately, this also comes with the power to create deadly propaganda, which we’re already starting to see. And the only defense we have against that is critical thinking and reliable sources of information, which will become essential. Regardless, generative AI isn’t going anywhere anytime soon…”

As always, the entertainment industry and politics are said to be ultimately driven by the will of the people. If that’s still true, then the question becomes not whether AI can produce ever-more-realistic output or, perhaps, even whether it should… the question will be, how closely we pay attention when it does and where we decide to draw its boundaries… rather than the other way around.

Main Images: Spore-Fall (Edenstone Group) and Patchwright (Gossip Goblin)…

John Mosby